Passive Recon Tool

TL;DR: Passive OSINT pipeline that maps a domain’s attack surface without direct interaction, correlates multi-source data, and generates a structured risk-based security report.

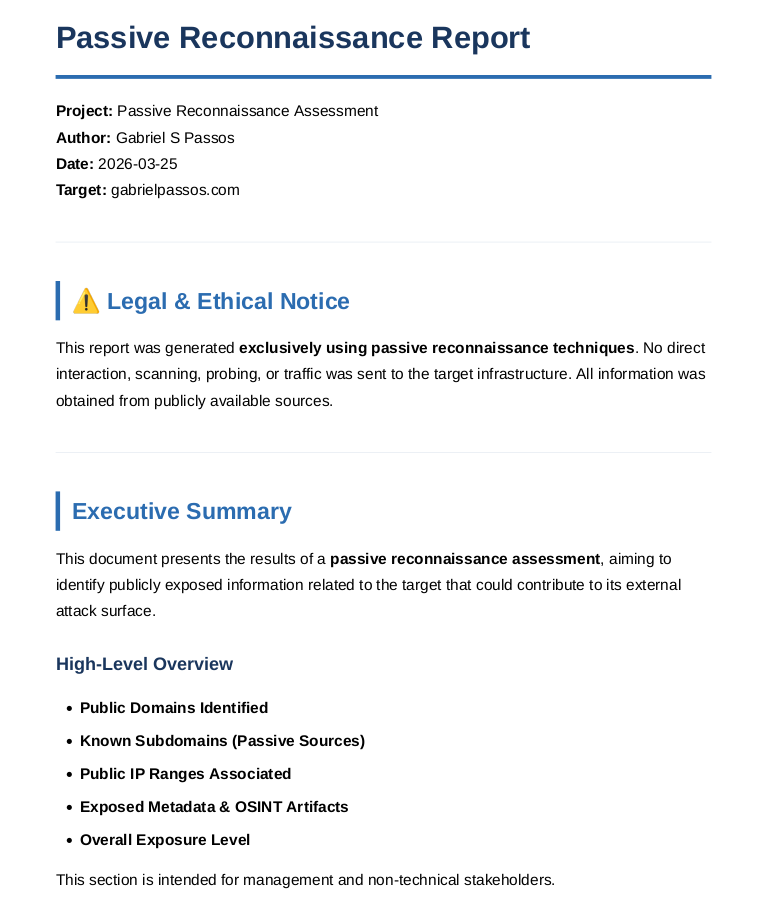

Report First Page

I developed a passive reconnaissance pipeline capable of mapping a target domain’s attack surface using only publicly available data (OSINT).

Unlike traditional scanning approaches, this tool never interacts directly with the target, which minimizes detection risk while still providing meaningful security insights.

The system aggregates data from multiple external sources, correlates findings, and transforms fragmented information into a structured and actionable security report.

This project simulates a real-world reconnaissance phase used in penetration testing and attack surface analysis, focusing on scalability, automation, and low-noise information gathering.

This approach ensures:

- No direct contact with the target

- Lower legal and ethical risk than active scanning

- Real-world applicability in reconnaissance phases

Technical Decisions

Several design choices were made to ensure reliability, scalability, and data consistency:

VirusTotal (Passive DNS):

Selected for historical DNS resolution data, allowing timeline-based infrastructure analysis and identification of IP reuse.

The implementation deduplicates records and tracks first/last seen timestamps.

crt.sh (Certificate Transparency):

Used to enumerate subdomains from SSL certificates, enabling discovery of hidden or legacy assets often missed by DNS-based methods.

Wildcard certificates are handled separately to avoid noise.

Threaded Resolution (Concurrency):

Subdomain and infrastructure validation use multithreading to significantly reduce execution time.

Data Normalization Layer:

WHOIS data is normalized into a structured domain model to handle inconsistent formats across registrars.

Deduplication Strategy:

DNS records, certificates, and subdomains are aggregated using key-based correlation to avoid redundant entries.

Resilience Handling:

API failures and timeouts are caught and handled, so the pipeline keeps running even when only partial data is available.

Modular Architecture:

Each stage (collection, processing, enrichment, reporting) is isolated, enabling easy extension and maintenance.
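A minimal sketch of how threaded resolution and resilience handling could fit together, using only the standard library: `socket.gethostbyname` as the default resolver and a `ThreadPoolExecutor` for concurrency. The function names and the `max_workers` default are illustrative assumptions, not the tool's actual code. Lookups go through the system's configured resolver, and a failed lookup marks the host inactive instead of stopping the run.

```python
import socket
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Iterable, Optional

# Hypothetical resolver signature: hostname in, IPv4 address string out.
Resolver = Callable[[str], str]


def resolve_one(host: str, resolver: Resolver) -> Optional[str]:
    """Return the resolved address, or None when the lookup fails."""
    try:
        return resolver(host)
    except (OSError, UnicodeError):
        # socket.gaierror is an OSError subclass; UnicodeError covers
        # malformed labels. Either way the host is treated as inactive.
        return None


def classify_hosts(
    hosts: Iterable[str],
    resolver: Resolver = socket.gethostbyname,
    max_workers: int = 20,
) -> Dict[str, Dict[str, Optional[str]]]:
    """Resolve unique hosts in parallel and label each active or inactive."""
    unique = sorted(set(hosts))
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        ips = list(pool.map(lambda h: resolve_one(h, resolver), unique))
    return {
        host: {"ip": ip, "status": "active" if ip else "inactive"}
        for host, ip in zip(unique, ips)
    }
```

The resolver is injectable, so the classification logic can be exercised with a stub instead of live DNS, e.g. `classify_hosts(subdomains, resolver=my_stub)`.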

Objectives

This project was built with the following goals:

- Automate passive reconnaissance workflows

- Aggregate multiple OSINT sources into a single pipeline

- Identify security-relevant patterns in public data

- Generate a clean and readable report for analysis

- Simulate a real-world reconnaissance phase used in pentesting

Key Concepts

This project explores important cybersecurity concepts:

- Passive Reconnaissance (OSINT)

- Attack Surface Mapping

- Certificate Transparency

- Metadata Exposure

- Infrastructure Enumeration

- Risk Assessment Modeling

Architecture

The tool follows a modular pipeline:

Pipeline Diagram

[Input Layer]

└── Target Domain

[Collection Layer]

├── WHOIS Collection

├── Passive DNS (VirusTotal API)

├── Certificate Transparency (crt.sh)

└── Wayback Machine (Document Discovery)

[Processing Layer]

├── Data Normalization (WHOIS parsing)

├── Deduplication (DNS, certs, subs)

├── Timeline Correlation (first_seen / last_seen)

└── Metadata Classification

[Enrichment Layer]

├── IP Intelligence (IPInfo API)

└── Active/Inactive Resolution (Threaded DNS lookup)

[Analysis Layer]

├── Subdomain Risk Detection (keyword-based)

├── Metadata Exposure Analysis

├── Infrastructure Sizing

└── Domain Lifecycle Analysis

[Scoring Engine]

└── Heuristic Risk Model (Low/Medium/High → Score → Average)

[Output Layer]

└── HTML Report Generator
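One way to express the layered pipeline in code, sketched under the assumption that every stage is a function that reads a shared context dictionary and returns its result. The `run_pipeline` helper and the stage names are hypothetical; the point is that each stage stays isolated and a failing stage is recorded rather than aborting the run.

```python
from typing import Any, Callable, Dict, List, Tuple

# Hypothetical types: a stage is (name, function(context) -> result).
Context = Dict[str, Any]
Stage = Tuple[str, Callable[[Context], Any]]


def run_pipeline(domain: str, stages: List[Stage]) -> Context:
    """Run stages in order, storing each result in a shared context.

    A stage that raises is recorded under ctx["errors"] and its result is
    set to None, so later stages still run with whatever data exists.
    """
    ctx: Context = {"domain": domain, "errors": {}}
    for name, stage in stages:
        try:
            ctx[name] = stage(ctx)
        except Exception as exc:  # broad on purpose: one bad source must not stop the run
            ctx["errors"][name] = repr(exc)
            ctx[name] = None
    return ctx
```

For example, `run_pipeline("example.com", [("whois", collect_whois), ("dns", collect_dns), ("report", build_report)])` would run three hypothetical stage functions in order, and `ctx["errors"]` would list any stage that raised.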

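External APIs Used

- VirusTotal API (passive DNS)

- crt.sh (Certificate Transparency logs)

- IPInfo API (IP intelligence)

- Wayback Machine (archived document discovery)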

Project Structure

.

├── recon.py # Main execution script

├── passive.py # OSINT data collection

├── data_filter.py # WHOIS data processing

├── domain.py # Domain data model

├── pdfgenerator.py # Report generation

├── requirements.txt

└── template/

└── report_template_passive_css.html

Data Collection Techniques

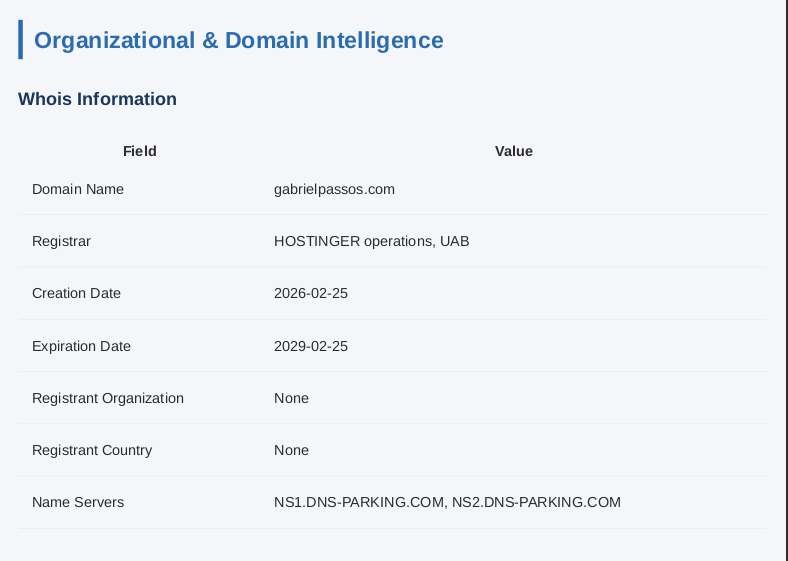

1. WHOIS Analysis

Used to extract:

- Registrar information

- Domain lifecycle dates

- Organization details

Whois Section

Security insight: Missing or obfuscated data may indicate privacy protection or misconfiguration.
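WHOIS output varies by registrar and library: a date may come back as a `datetime`, a string in one of several formats, or a list of either. A minimal normalization sketch, assuming hypothetical raw keys (`registrar`, `org`, `creation_date`, `expiration_date`) and a hypothetical `DomainRecord` model; the real `domain.py` model may differ. The `days_until_expiry` helper shows how expiration proximity could feed the domain lifecycle analysis.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Mapping, Optional

# Hypothetical set of formats; real registrars use many more.
DATE_FORMATS = ("%Y-%m-%d", "%Y-%m-%dT%H:%M:%SZ", "%d-%b-%Y", "%Y.%m.%d")


def parse_date(value: Any) -> Optional[datetime]:
    """Coerce a WHOIS date (datetime, string, or list of either) to UTC."""
    if isinstance(value, (list, tuple)):
        # Some sources repeat a date in several formats; keep the earliest.
        parsed = [d for d in (parse_date(v) for v in value) if d is not None]
        return min(parsed) if parsed else None
    if isinstance(value, datetime):
        if value.tzinfo:
            return value.astimezone(timezone.utc)
        return value.replace(tzinfo=timezone.utc)  # assume naive dates are UTC
    if isinstance(value, str):
        for fmt in DATE_FORMATS:
            try:
                return datetime.strptime(value.strip(), fmt).replace(tzinfo=timezone.utc)
            except ValueError:
                continue
    return None


@dataclass
class DomainRecord:
    """Hypothetical normalized domain model."""
    name: str
    registrar: Optional[str]
    organization: Optional[str]
    created: Optional[datetime]
    expires: Optional[datetime]

    def days_until_expiry(self, now: Optional[datetime] = None) -> Optional[int]:
        if self.expires is None:
            return None
        now = now or datetime.now(timezone.utc)
        return (self.expires - now).days


def normalize_whois(name: str, raw: Mapping[str, Any]) -> DomainRecord:
    """Map a raw WHOIS dict (hypothetical keys) onto DomainRecord."""

    def first_text(key: str) -> Optional[str]:
        value = raw.get(key)
        if isinstance(value, (list, tuple)):
            value = value[0] if value else None
        if isinstance(value, str) and value.strip():
            return value.strip()
        return None  # missing or blank fields stay None (may signal privacy protection)

    return DomainRecord(
        name=name.lower().rstrip("."),
        registrar=first_text("registrar"),
        organization=first_text("org"),
        created=parse_date(raw.get("creation_date")),
        expires=parse_date(raw.get("expiration_date")),
    )
```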

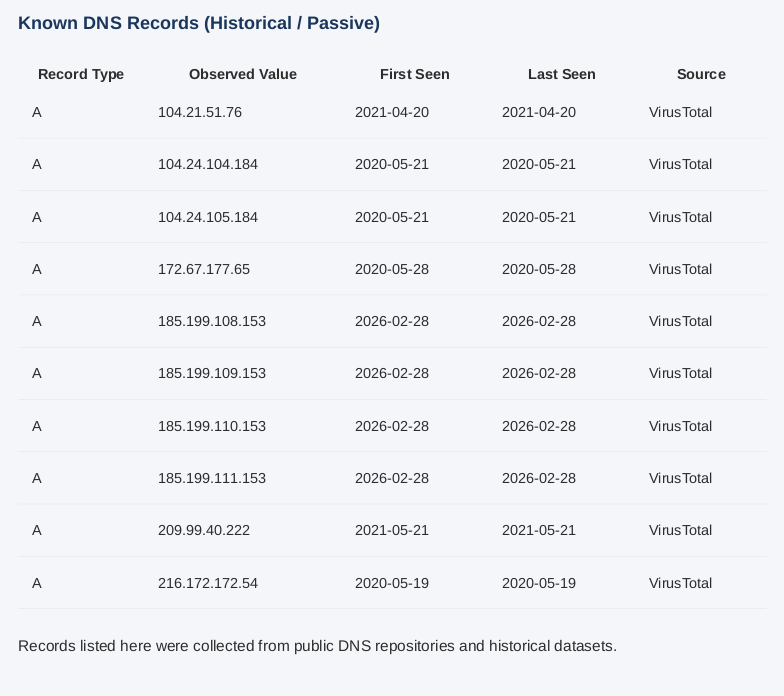

2. Passive DNS

Collected via VirusTotal API:

- Historical IP resolutions

- Infrastructure mapping

Security insight: Helps identify infrastructure changes and potential shared hosting risks.

DNS Records Section
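Passive DNS sources often report the same IP many times across observation dates. A sketch of the deduplication and first/last-seen tracking described in Technical Decisions, assuming each record has already been flattened to a dict with `ip_address` and a Unix-timestamp `date` (field names loosely modeled on VirusTotal's resolution objects; verify them against the API documentation). Function names are hypothetical.

```python
from typing import Dict, Iterable, List, Mapping, Tuple


def merge_resolutions(records: Iterable[Mapping]) -> Dict[str, dict]:
    """Collapse passive-DNS rows into one entry per IP with first/last seen."""
    merged: Dict[str, dict] = {}
    for rec in records:
        ip, ts = rec.get("ip_address"), rec.get("date")
        if not ip or ts is None:
            continue  # skip incomplete rows instead of failing the whole stage
        entry = merged.setdefault(ip, {"first_seen": ts, "last_seen": ts, "hits": 0})
        entry["first_seen"] = min(entry["first_seen"], ts)
        entry["last_seen"] = max(entry["last_seen"], ts)
        entry["hits"] += 1
    return merged


def timeline(merged: Dict[str, dict]) -> List[Tuple[str, dict]]:
    """Order IPs by first appearance to show how hosting changed over time."""
    return sorted(merged.items(), key=lambda item: item[1]["first_seen"])
```

Keying on the IP is what removes duplicates; the min/max pair then gives the time window in which each address served the domain.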

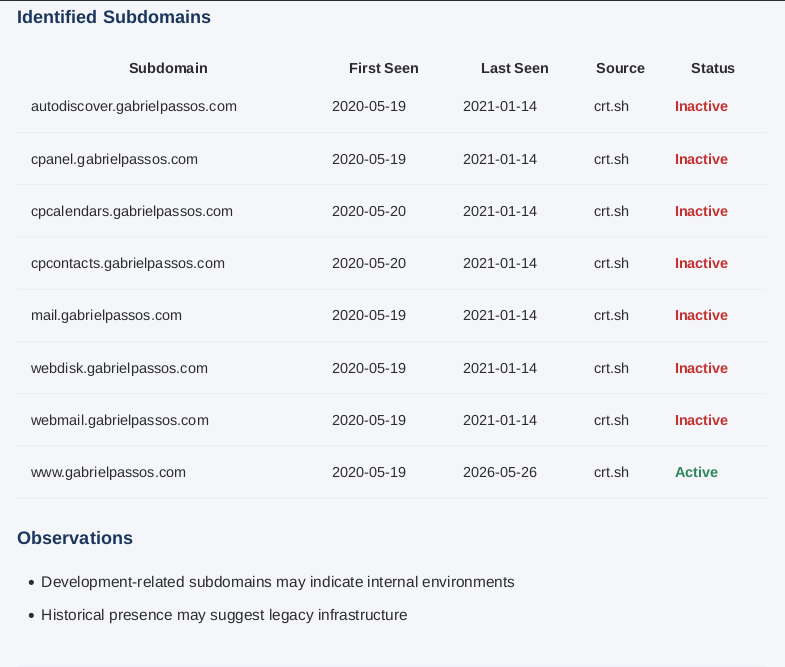

3. Subdomain Enumeration

Using certificate transparency logs (crt.sh):

- Discovers hidden or forgotten subdomains

Identified Subs Section
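A sketch of subdomain extraction from crt.sh, assuming its JSON output (`output=json`) with `%` as the wildcard in the `q` parameter, where each entry's `name_value` field may hold several names separated by newlines. Wildcard names go into a separate set, mirroring the separate wildcard handling described earlier. Function names are hypothetical.

```python
import json
import urllib.parse
import urllib.request
from typing import Iterable, Mapping, Set, Tuple


def fetch_crtsh(domain: str, timeout: float = 30.0) -> list:
    """Query crt.sh's JSON output for certificates matching %.domain."""
    query = urllib.parse.urlencode({"q": f"%.{domain}", "output": "json"})
    with urllib.request.urlopen(f"https://crt.sh/?{query}", timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


def extract_subdomains(entries: Iterable[Mapping], domain: str) -> Tuple[Set[str], Set[str]]:
    """Split certificate names into concrete subdomains and wildcard entries."""
    suffix = "." + domain.lower().rstrip(".")
    subs: Set[str] = set()
    wildcards: Set[str] = set()
    for entry in entries:
        # name_value may hold several names separated by newlines.
        for name in str(entry.get("name_value", "")).splitlines():
            name = name.strip().lower().rstrip(".")
            if not name.endswith(suffix):
                continue  # apex or unrelated names (e.g. "evil-example.com")
            if name.startswith("*."):
                wildcards.add(name)
            else:
                subs.add(name)
    return subs, wildcards
```

Keeping parsing separate from fetching lets the extraction logic run on saved responses without touching the network.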

Security insight: Subdomains like dev, test, or admin may expose sensitive environments.

In this example, the observed dates in the collected data reveal that the domain gabrielpassos.com has been reused.
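The keyword-based detection could look like the following sketch. The keyword tuple uses the patterns named in this write-up; the function name and the token-splitting rule are assumptions.

```python
import re
from typing import Dict, Iterable, List

# Patterns taken from this write-up; extend as needed.
SENSITIVE_KEYWORDS = ("dev", "test", "admin", "internal")


def flag_sensitive(subdomains: Iterable[str], domain: str) -> Dict[str, List[str]]:
    """Return {subdomain: [matched keywords]} for names with sensitive labels."""
    suffix = "." + domain.lower()
    flagged: Dict[str, List[str]] = {}
    for sub in subdomains:
        sub = sub.lower()
        prefix = sub[: -len(suffix)] if sub.endswith(suffix) else sub
        # Split on dots, hyphens, and underscores so "dev-api" matches "dev"
        # while "device" does not.
        tokens = set(re.split(r"[.\-_]", prefix))
        hits = [kw for kw in SENSITIVE_KEYWORDS if kw in tokens]
        if hits:
            flagged[sub] = hits
    return flagged
```

Matching whole tokens avoids flagging names like `device.example.com`, at the cost of missing variants such as `dev2`; a prefix or substring match would trade in the other direction.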

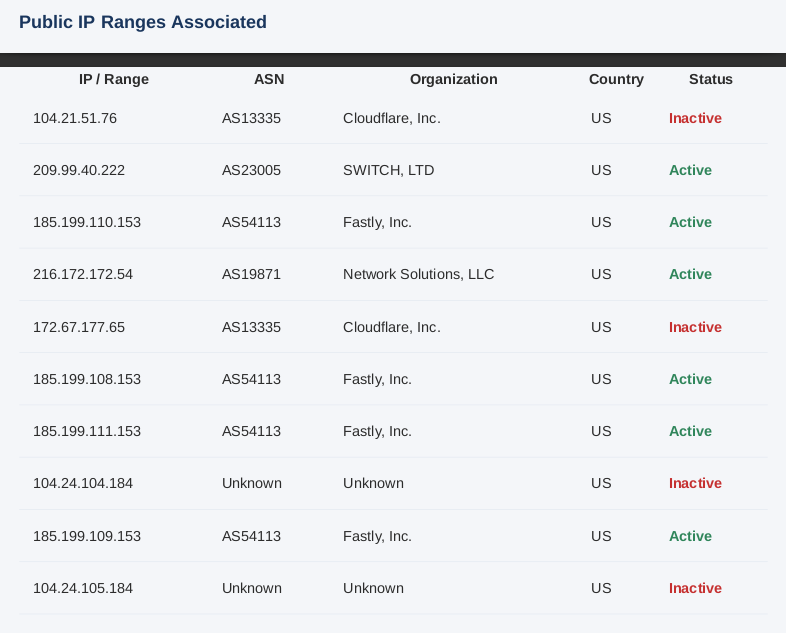

4. Infrastructure Analysis

IP enrichment using external APIs:

- ASN

- Organization

- Country

Identified IPs Section

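Security insight: Mapping each IP to its ASN, owning organization, and country expands visibility into the attack surface, including the hosting or cloud providers behind the domain.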
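A sketch of the enrichment step, assuming IPInfo's `https://ipinfo.io/<ip>/json` endpoint, an optional `token` query parameter, and an `org` field that carries the ASN as a prefix (for example `AS15169 Google LLC`). Treat this field layout as an assumption to check against IPInfo's documentation; function names are hypothetical. `asn_distribution` shows one rough way to size infrastructure spread.

```python
import json
import re
import urllib.parse
import urllib.request
from collections import Counter
from typing import Dict, Iterable, Mapping, Optional

ASN_PREFIX = re.compile(r"^(AS\d+)\s*(.*)$")


def fetch_ipinfo(ip: str, token: Optional[str] = None, timeout: float = 10.0) -> dict:
    """Fetch IPInfo's JSON record for one IP (assumed endpoint and parameter)."""
    url = f"https://ipinfo.io/{urllib.parse.quote(ip, safe=':')}/json"
    if token:
        url += "?" + urllib.parse.urlencode({"token": token})
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


def parse_ipinfo(payload: Mapping) -> Dict[str, Optional[str]]:
    """Split an 'org' value such as 'AS15169 Google LLC' into ASN and organization."""
    org = (payload.get("org") or "").strip()
    match = ASN_PREFIX.match(org)  # requires digits after "AS", so "ASUS Corp" is not an ASN
    if match:
        asn, name = match.group(1), match.group(2) or None
    else:
        asn, name = None, org or None
    return {
        "ip": payload.get("ip"),
        "asn": asn,
        "organization": name,
        "country": payload.get("country"),
    }


def asn_distribution(records: Iterable[Mapping]) -> Counter:
    """Count enriched IPs per ASN as a rough measure of infrastructure spread."""
    return Counter(r.get("asn") or "unknown" for r in records)
```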

5. Public Document Discovery

Wayback Machine is used to find:

- PDFs

- DOC/DOCX files

Security insight: Documents may expose:

- Internal usernames

- Software versions

- Sensitive metadata
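A sketch of document discovery through the Wayback Machine's CDX server API, assuming its JSON output (a header row followed by data rows) and the `url`, `output`, `fl`, and `collapse` parameters. The extension filter and helper names are hypothetical; the original tool's exact query is not shown in this write-up.

```python
import json
import urllib.parse
import urllib.request
from typing import Dict, List

DOC_EXTENSIONS = (".pdf", ".doc", ".docx")
CDX_ENDPOINT = "https://web.archive.org/cdx/search/cdx"


def build_cdx_url(domain: str) -> str:
    """Build a CDX query listing archived URLs under the domain."""
    params = {
        "url": f"{domain}/*",
        "output": "json",
        "fl": "original,timestamp,mimetype",
        "collapse": "urlkey",  # one row per unique URL
    }
    return f"{CDX_ENDPOINT}?{urllib.parse.urlencode(params)}"


def fetch_cdx(domain: str, timeout: float = 60.0) -> list:
    with urllib.request.urlopen(build_cdx_url(domain), timeout=timeout) as resp:
        body = resp.read().decode("utf-8")
    return json.loads(body) if body.strip() else []


def filter_documents(rows: list) -> List[Dict[str, str]]:
    """Keep archived URLs whose path ends in a document extension."""
    if not rows:
        return []
    header, *data = rows  # first row holds the field names
    docs = []
    for row in data:
        record = dict(zip(header, row))
        path = urllib.parse.urlsplit(record.get("original", "")).path.lower()
        if path.endswith(DOC_EXTENSIONS):
            docs.append(record)
    return docs
```

Filtering on the URL path (not the full URL) keeps query strings like `?x=1` from hiding a `.pdf` extension.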

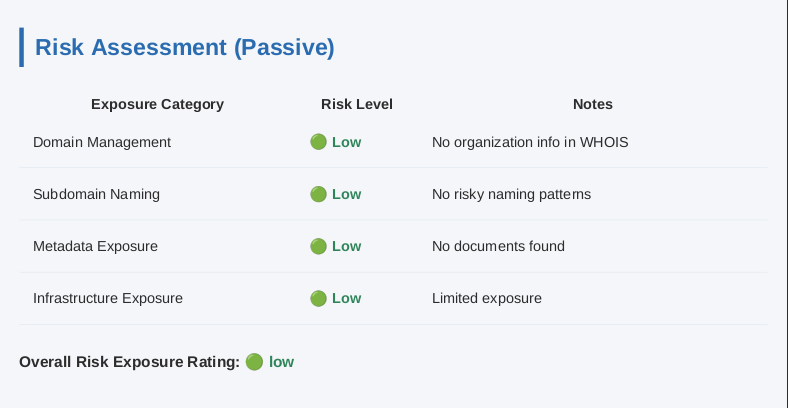

Risk Assessment Model

The tool implements a heuristic-based risk scoring system that evaluates multiple aspects of the target:

- Domain Management: Based on expiration proximity and WHOIS completeness

- Subdomain Exposure: Detection of sensitive naming patterns such as dev, test, admin, and internal

- Metadata Exposure: Volume of publicly accessible documents and potential information leakage

- Infrastructure Exposure: Size and distribution of the identified infrastructure

Risk Assessment Section

Each category is classified as Low, Medium, or High, and assigned a numerical score:

- Low = 1

- Medium = 2

- High = 3

The overall risk is calculated using an average-based scoring model:

- ≥ 2.5 → High

- ≥ 1.5 → Medium

- < 1.5 → Low

This approach provides a simple but effective way to prioritize potential risks based on aggregated indicators.
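The averaging step can be sketched directly from the scores and thresholds above; the function name and the category labels used in the example are illustrative.

```python
from typing import Mapping, Tuple

LEVEL_SCORES = {"Low": 1, "Medium": 2, "High": 3}


def overall_risk(categories: Mapping[str, str]) -> Tuple[float, str]:
    """Average the per-category scores and map the mean back to a level."""
    if not categories:
        raise ValueError("at least one category is required")
    scores = [LEVEL_SCORES[level] for level in categories.values()]
    avg = sum(scores) / len(scores)  # unweighted mean
    if avg >= 2.5:
        level = "High"
    elif avg >= 1.5:
        level = "Medium"
    else:
        level = "Low"
    return avg, level
```

For example, one category at High and three at Low averages (3 + 1 + 1 + 1) / 4 = 1.5, which maps to Medium. Because this is an unweighted mean, a single High category can be diluted by several Low ones, so the overall level is best read together with the per-category results.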

Results

The tool successfully automates the reconnaissance workflow and produces a structured security report in a short time frame.

Example outcomes include:

- Identification of multiple subdomains through certificate transparency logs

- Detection of active vs inactive assets via DNS resolution

- Discovery of publicly accessible documents from archived sources

- Correlation of infrastructure data (ASN, organization, geolocation)

- Automated classification of risk across multiple categories

Execution is optimized through concurrency, allowing multiple enrichment and validation tasks to run in parallel, significantly reducing total runtime.

The final output is a comprehensive HTML report that consolidates all findings into a readable and actionable format.

Limitations

While effective, the tool has some limitations:

- Relies on third-party APIs, which may introduce rate limits or incomplete data

- Passive-only approach may miss assets not exposed through public sources

- WHOIS data inconsistency across registrars can affect accuracy

- Risk scoring is heuristic-based and does not replace manual analysis

These limitations reflect real-world constraints of passive reconnaissance techniques.

Output

The final output is an HTML report containing:

- WHOIS data

- DNS records

- Subdomains (active/inactive)

- Certificates

- Infrastructure details

- Metadata findings

- Risk assessment

Key Challenges

During development, some challenges included:

- Handling inconsistent WHOIS formats

- Normalizing data from multiple APIs

- Avoiding duplicate records

- Managing API failures and timeouts

- Designing a clean and readable report

What I Learned

This project helped me improve:

- OSINT data correlation

- Secure data handling

- Writing modular Python code

- Designing analysis pipelines

- Thinking like an attacker during recon

Future Improvements

Planned enhancements:

- Integration with additional OSINT sources

- PDF export support

- Improved risk scoring model

- Web-based interface

- Caching for faster execution

Ethical Considerations

This tool operates strictly in a passive manner, meaning:

- No direct interaction with the target

- No scanning or exploitation

- Only publicly available data is used

Conclusion

This project demonstrates how publicly available data can be systematically leveraged to map a target’s attack surface without active interaction.

Beyond data collection, the focus was on building a structured pipeline capable of:

- Correlating multiple OSINT sources

- Reducing noise through normalization and deduplication

- Automating analysis and reporting

- Providing actionable security insights

This work reflects my ability to design scalable reconnaissance workflows and think in terms of attack surface analysis, a critical component in modern cybersecurity operations.